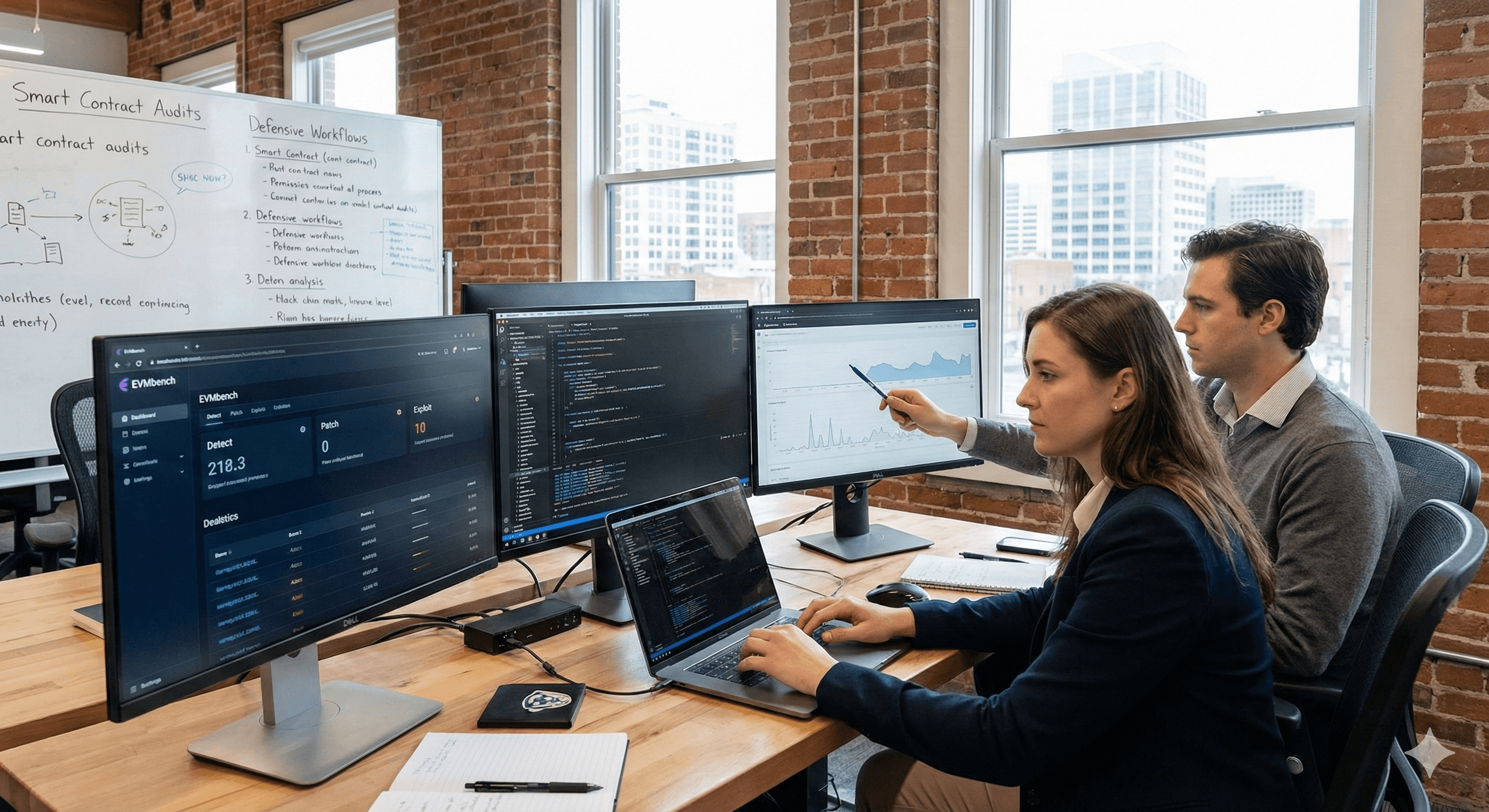

Introducing EVMbench: benchmarking AI for smart contract security

OpenAI

EVMbench is a new benchmark from OpenAI and Paradigm that tests whether AI agents can detect, patch and exploit high‑severity smart contract vulnerabilities in Ethereum Virtual Machine (EVM) environments. Built from 120 curated vulnerabilities, it measures performance in economically meaningful scenarios using reproducible, sandboxed deployments and programmatic grading.

Smart contracts routinely secure $100B+ in open-source crypto assets. As AI agents get better at reading, writing and running code, it becomes essential to measure what they can do in environments where mistakes — or misuse — have real economic consequences.

That’s the motivation behind EVMbench, a new benchmark introduced by OpenAI in collaboration with Paradigm. EVMbench evaluates AI agents’ ability to detect, patch, and exploit high‑severity smart contract vulnerabilities in a sandboxed blockchain environment.

Updated as of 19/02/2026: Based on OpenAI’s publication “Introducing EVMbench”.

What is EVMbench?

EVMbench is designed to move beyond “can a model spot a bug in a snippet?” and towards economically meaningful security evaluation.

The benchmark draws on 120 curated vulnerabilities from 40 audits, with many sourced from open audit competitions. It also includes additional scenarios from the security auditing process for the Tempo blockchain (a payments‑oriented L1), to reflect where agentic stablecoin payments and on‑chain financial activity may grow.

How EVMbench works: three capability modes

EVMbench evaluates agents across three modes:

1) Detect

Agents audit a smart contract repository and are scored on recall of ground‑truth vulnerabilities and associated audit rewards.

2) Patch

Agents modify vulnerable contracts and must preserve intended functionality while removing exploitability, verified via automated tests and exploit checks.

3) Exploit

Agents execute end‑to‑end fund‑draining attacks against deployed contracts in a sandboxed environment, graded programmatically via transaction replay and on‑chain verification.
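The scoring idea behind Detect mode can be illustrated with a small sketch. It computes plain recall and a reward-weighted recall over a set of ground-truth findings. The finding IDs, reward amounts, and the weighting formula are assumptions made for illustration; the source does not give the exact formula EVMbench uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """A ground-truth vulnerability and the audit reward it earned (hypothetical data)."""
    finding_id: str
    reward_usd: float


def detect_scores(ground_truth: list[Finding], reported_ids: set[str]) -> tuple[float, float]:
    """Return (recall, reward_weighted_recall) for one audited repository."""
    if not ground_truth:
        raise ValueError("ground_truth must not be empty")
    # Only reported IDs that match a ground-truth finding count as hits.
    found = [f for f in ground_truth if f.finding_id in reported_ids]
    recall = len(found) / len(ground_truth)
    total_reward = sum(f.reward_usd for f in ground_truth)
    reward_recall = sum(f.reward_usd for f in found) / total_reward if total_reward else 0.0
    return recall, reward_recall


# Illustrative values only: three known issues, the agent reports one real and one spurious.
truth = [Finding("H-01", 6000.0), Finding("H-02", 3000.0), Finding("H-03", 1000.0)]
recall, reward_recall = detect_scores(truth, {"H-01", "H-99"})
print(f"recall={recall:.2f} reward_weighted={reward_recall:.2f}")
```

Under this sketch, an agent that stops after its first finding scores low on recall even if that finding was the most valuable one.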

To make results reproducible, OpenAI built a Rust-based harness that deploys contracts, replays agent transactions deterministically, and restricts unsafe RPC methods. Exploit tasks run in an isolated local Anvil environment (not live networks), and the included vulnerabilities are historical and publicly documented.
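To make "restricts unsafe RPC methods" concrete, here is a minimal allowlist filter for single JSON-RPC requests. It is written in Python for readability, whereas the harness described above is Rust. The allowlist contents and the function name are assumptions; the source does not list which methods EVMbench permits or blocks.

```python
import json

# Assumed allowlist of standard Ethereum JSON-RPC methods (illustrative only).
ALLOWED_METHODS = frozenset({
    "eth_chainId",
    "eth_blockNumber",
    "eth_getBalance",
    "eth_getTransactionCount",
    "eth_call",
    "eth_estimateGas",
    "eth_sendRawTransaction",
    "eth_getTransactionReceipt",
})


def check_rpc_request(raw: str) -> tuple[bool, dict]:
    """Return (True, request) if the method is allowlisted, else (False, error response)."""
    request = json.loads(raw)
    # This sketch handles single requests only; batches (JSON arrays) are rejected.
    if not isinstance(request, dict):
        return False, {"jsonrpc": "2.0", "id": None,
                       "error": {"code": -32600, "message": "batch requests not supported"}}
    method = request.get("method")
    if method in ALLOWED_METHODS:
        return True, request
    # -32601 is the JSON-RPC 2.0 "method not found" code, reused here for blocked methods.
    return False, {"jsonrpc": "2.0", "id": request.get("id"),
                   "error": {"code": -32601, "message": f"method not allowed: {method}"}}


ok, _ = check_rpc_request('{"jsonrpc": "2.0", "id": 1, "method": "eth_call", "params": []}')
blocked, err = check_rpc_request('{"jsonrpc": "2.0", "id": 2, "method": "anvil_setBalance", "params": []}')
print(ok, blocked, err["error"]["message"])
```

An allowlist is used rather than a blocklist so that any node-specific method not explicitly reviewed, such as a state-manipulation call on a local test node, is denied by default.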

What the early results show

OpenAI reports that, in the exploit mode, GPT‑5.3‑Codex (via Codex CLI) achieved a score of 72.2%, compared with GPT‑5 at 31.9%.

Performance is notably weaker on detect and patch. OpenAI’s interpretation is revealing:

In detect, agents sometimes stop after finding one issue instead of auditing exhaustively.

In patch, removing subtle vulnerabilities while maintaining full functionality remains hard.

For practitioners, this is the key takeaway: a task with one explicit, checkable objective (drain the funds) can be easier for agents than the more open-ended work of comprehensive auditing and safe remediation.

Why this matters for enterprises (not just crypto teams)

Even if you don’t ship smart contracts, EVMbench matters because it’s a preview of how AI cyber capability is evolving:

It measures end-to-end agent behaviour (planning + acting), not just static analysis.

It highlights where agents may become more effective for attackers — and where defenders can benefit first.

OpenAI positions EVMbench as both a measurement tool and a call to action: as models improve, developers and security teams should incorporate AI-assisted auditing into workflows.

Practical steps: how to use this insight defensively

If you’re responsible for security, engineering, or risk, here’s how to turn EVMbench into action.

Step 1: Treat agentic security as dual‑use

Agent capabilities can strengthen defence and enable misuse. Start by defining what “defensive use” means in your organisation (scanning, triage, patch suggestions) and where you will not allow automation (exploitation, offensive research without authorisation).

Step 2: Pilot AI-assisted auditing with guardrails

Good pilots have boundaries:

read-only access to repos by default

explicit human approval for any patch merge

logging of prompts, outputs and changes

test-driven verification and regression checks

Step 3: Measure outcomes, not novelty

Track:

time to identify likely issues

false positive rate

patch acceptance rate after review

time to reproduce and verify exploitability in a safe environment
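These metrics can be computed from a simple log of pilot findings. The sketch below assumes a hypothetical record shape (`PilotFinding`) and treats "false positive rate" as the share of reported findings that human review did not confirm. Both are illustrative choices, not a prescribed method.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class PilotFinding:
    """One AI-reported finding from the pilot (hypothetical record shape)."""
    minutes_to_identify: float
    confirmed: bool        # human review confirmed a real issue
    patch_proposed: bool   # the agent suggested a patch
    patch_accepted: bool   # the patch was merged after review


def pilot_metrics(findings: list[PilotFinding]) -> dict:
    """Summarise time to identify, false positive rate, and patch acceptance rate."""
    if not findings:
        raise ValueError("findings must not be empty")
    unconfirmed = sum(not f.confirmed for f in findings)
    proposed = [f for f in findings if f.patch_proposed]
    return {
        "median_minutes_to_identify": median(f.minutes_to_identify for f in findings),
        "false_positive_rate": unconfirmed / len(findings),
        # None when no patches were proposed, to avoid dividing by zero.
        "patch_acceptance_rate": (
            sum(f.patch_accepted for f in proposed) / len(proposed) if proposed else None
        ),
    }


# Illustrative data only.
log = [
    PilotFinding(12, True, True, True),
    PilotFinding(30, False, False, False),
    PilotFinding(45, True, True, False),
    PilotFinding(20, True, False, False),
]
metrics = pilot_metrics(log)
print(metrics)
```

Time to reproduce and verify exploitability could be tracked the same way by adding another duration field to the record.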

Step 4: Expand your control framework for agents

If you plan to use agents with tools (CI/CD, deployment, ticketing), adopt principles such as least privilege, step-by-step confirmations for risky actions, and separation of duties.
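One way to picture these three principles is a small authorisation check that runs before an agent invokes any tool. The role names, tool names, and policy tables below are hypothetical; a real deployment would map them onto its own CI/CD and ticketing permissions.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical role-to-tool grants (least privilege): each agent role gets only what it needs.
ROLE_GRANTS = {
    "auditor-agent": {"repo.read", "ticket.comment"},
    "patch-agent": {"repo.read", "repo.open_pull_request"},
}
# Hypothetical set of risky actions that need a named human approver before they run.
RISKY_ACTIONS = {"repo.open_pull_request"}


@dataclass(frozen=True)
class ActionRequest:
    role: str
    action: str
    requested_by: str
    approved_by: Optional[str] = None


def authorize(req: ActionRequest) -> tuple[bool, str]:
    """Apply least privilege, confirmation for risky actions, and separation of duties."""
    if req.action not in ROLE_GRANTS.get(req.role, set()):
        return False, "denied: action not granted to role"
    if req.action in RISKY_ACTIONS:
        if req.approved_by is None:
            return False, "pending: human confirmation required"
        if req.approved_by == req.requested_by:
            return False, "denied: approver must differ from requester"
    return True, "allowed"


print(authorize(ActionRequest("auditor-agent", "repo.open_pull_request", "alice")))
print(authorize(ActionRequest("patch-agent", "repo.open_pull_request", "alice")))
print(authorize(ActionRequest("patch-agent", "repo.open_pull_request", "alice", "bob")))
```

Unknown roles and ungranted actions fall through to a denial, so a new tool is blocked until someone explicitly grants it.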

How Generation Digital can help

If you’re adopting AI for security workflows (or evaluating agents more broadly), we can help you:

design a measurable pilot for AI-assisted auditing

set governance and safe tooling controls

create enablement materials so teams use AI consistently

Summary

EVMbench is a practical benchmark for a real shift: AI agents are moving from spotting issues to executing attacks end‑to‑end, including exploitation. The best response is not to ignore that shift but to adopt defensive AI workflows with strong guardrails, and to measure what works.

Next steps

Identify one security workflow to pilot with AI assistance.

Set permissions and human approval gates.

Measure outcomes and iterate.

FAQs

Q1: What is EVMbench?

EVMbench is a benchmark from OpenAI and Paradigm that evaluates AI agents’ ability to detect, patch, and exploit high‑severity vulnerabilities in Ethereum Virtual Machine (EVM) smart contracts.

Q2: What data does EVMbench use?

It draws on 120 curated vulnerabilities from 40 audits, plus additional scenarios from the Tempo blockchain security auditing process.

Q3: What are the three modes in EVMbench?

Detect (find vulnerabilities), Patch (fix while preserving functionality), and Exploit (execute end‑to‑end fund‑draining attacks in a sandboxed environment).

Q4: What performance did OpenAI report?

OpenAI reports GPT‑5.3‑Codex scored 72.2% in exploit mode, compared to GPT‑5 at 31.9%.

Q5: How should organisations use this responsibly?

Use it to inform defensive AI adoption: start read‑only, require human approvals for changes, keep strong logs, and avoid enabling offensive misuse.
