OpenAI acquires Promptfoo: agent security testing

OpenAI

Pas sûr de quoi faire ensuite avec l'IA?Évaluez la préparation, les risques et les priorités en moins d'une heure.

➔ Téléchargez notre kit de préparation à l'IA gratuit

AI agent security testing is the practice of systematically evaluating, red teaming and monitoring AI assistants that can take actions in real systems. It focuses on risks like prompt injection, jailbreaks, data leakage and tool misuse. OpenAI’s acquisition of Promptfoo shows this testing is becoming a standard, built-in part of enterprise agent platforms.

OpenAI’s announcement that it plans to acquire Promptfoo is more than a straightforward M&A story. It’s a signal that agent security testing is becoming table stakes.

For years, many organisations treated LLM risk as something you handled with a policy document and a few prompt guardrails. But as “AI coworkers” move from chat windows into real workflows — reading internal data, calling tools and triggering actions — security has to become systematic, repeatable and measurable.

That is exactly what Promptfoo has been built for.

What OpenAI is buying

Promptfoo is an AI security and evaluation platform designed to help teams test and remediate vulnerabilities during development, not after something goes wrong.

In practice, it’s a way to:

run consistent evaluations against prompts, agents and RAG systems,

compare model behaviour over time,

and automate red teaming to surface failures early.

OpenAI says Promptfoo’s technology will be integrated into OpenAI Frontier (its platform for building and operating “AI coworkers”) once the deal closes.

Why this matters now: the agent era changes the threat model

The most important shift is not “better chat”. It’s tool use and autonomy.

An agent that can:

search your internal knowledge base,

access customer records,

create tickets,

or update systems of record,

…has a fundamentally different risk profile to a standalone chatbot.

The risk landscape also becomes broader:

Prompt injection isn’t theoretical anymore

If an agent can browse, read documents, or ingest user content, it can be manipulated by instructions embedded in that content. This is why prompt injection testing is one of the first areas enterprises need to operationalise.

Data leakage and “accidental exfiltration” become everyday failure modes

An assistant can be helpful and harmful at the same time. It might summarise sensitive information correctly — and still reveal too much, to the wrong person, in the wrong context.

Tool misuse is the new “oops”

When a model can call tools, errors are no longer just wrong answers. They can be wrong actions: emailing the wrong contact, attaching the wrong file, updating the wrong record, or running a query it shouldn’t.

Security teams need more than policy. They need test cases, controls, oversight and audit trails.

What OpenAI says will change inside Frontier

OpenAI’s post is clear about what it wants Promptfoo to accelerate:

Security and safety testing built into the platform

Expect more native checks for prompt injections, jailbreaks, data leaks, tool misuse and out-of-policy behaviour.Security and evaluation integrated into development workflows

This matters because it shifts evaluation from “a one-off before launch” to something closer to software QA: continuous, automated and tied to change.Oversight and accountability

Integrated reporting and traceability supports governance over time — which is where most organisations struggle once pilots become products.

The practical takeaway: treat agents like software (not content)

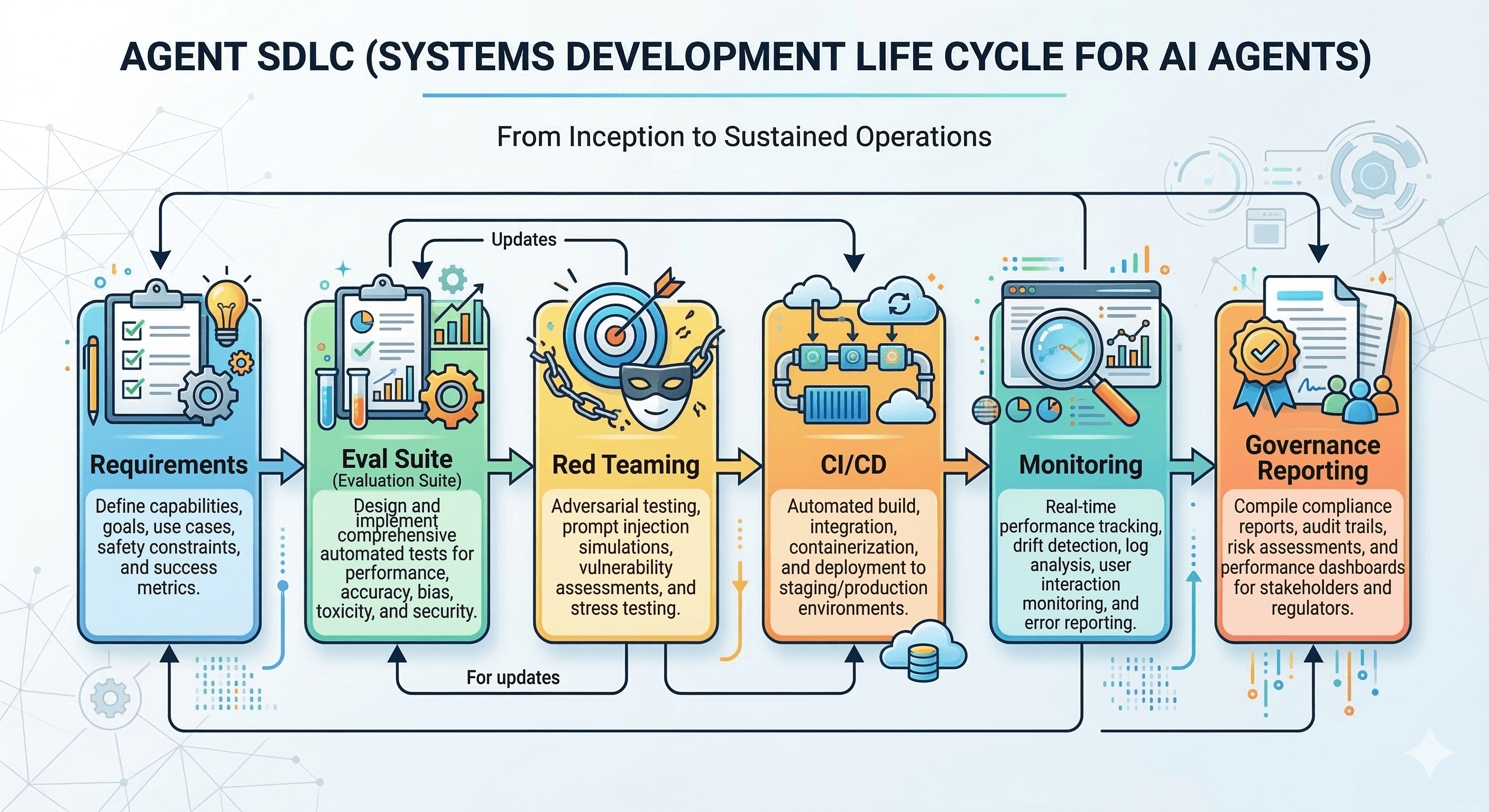

If you’re deploying agents, you need a pipeline that looks like engineering, not marketing:

A. Define your acceptable behaviour

What should the agent never do?

What data can it access, and for which roles?

Which tools can it call, with what constraints?

B. Build a test suite

Think in scenarios:

hostile inputs (jailbreak attempts, injections),

edge cases (ambiguous requests, partial data),

policy boundaries (PII, payment details, HR data),

and tool safety (incorrect IDs, stale links, permission errors).

C. Automate evaluation in CI/CD

The fastest way to create risk is to iterate quickly without regression testing. Put evaluation where it belongs: alongside code changes.

D. Create a monitoring loop

No matter how much you test, real users will surprise you. Production needs:

logging,

anomaly detection,

escalation paths,

and a way to roll back or constrain behaviour.

A 90-day plan to secure your agents

Days 1–30: Establish the baseline

Map your agent use cases and rank them by business impact and risk.

Identify critical data sources and tools the agent can access.

Write the first 30–50 test cases (including adversarial prompts).

Deliverable: a “minimum viable eval suite” and a simple pass/fail dashboard.

Days 31–60: Build repeatability

Standardise your evaluation criteria (accuracy, refusal, policy compliance, data minimisation).

Integrate checks into the dev workflow (pull requests, staging runs).

Add role-based access controls and tighten tool permissions.

Deliverable: tests that run automatically when prompts, tools or retrieval logic changes.

Days 61–90: Add governance and operational maturity

Define ownership: who approves new tools, data connectors and capabilities?

Add audit-ready reporting (what changed, when, and what it affected).

Create incident playbooks for common failure types.

Deliverable: a repeatable operating model that survives beyond the pilot team.

What this means for buyers and leaders

If you’re buying an agent platform or building one internally, ask suppliers (or your team) these questions:

How do you test for prompt injection, jailbreaks and data leakage?

Can you run evaluations automatically as part of releases?

What reporting and traceability do you provide for governance?

How do you limit tool access and prevent unsafe actions?

The point isn’t to buy “a security feature”. It’s to ensure evaluation and red teaming are baked into how your agents are built and operated.

How Generation Digital can help

We help organisations turn “agent ambition” into safe, measurable reality. That includes:

Designing evaluation frameworks tied to real workflows.

Building red teaming test suites and integrating them into delivery.

Creating governance and operating models that scale across teams.

Aligning security, compliance and digital experience so you can ship with confidence.

Next Steps

Pick one high-value agent use case and define “acceptable behaviour” in writing.

Build a test suite that includes hostile prompts and boundary cases.

Automate those tests in your dev workflow, and monitor behaviour in production.

Scale to the next use case once you can prove safety, performance and oversight.

FAQ

Q1: What is Promptfoo?

Promptfoo is a platform and open-source toolkit for evaluating and red teaming LLM applications, helping teams identify vulnerabilities and regressions before deployment.

Q2: Why is OpenAI acquiring Promptfoo?

OpenAI says it plans to integrate Promptfoo’s evaluation and security testing into OpenAI Frontier so enterprises can test agent behaviour, detect risks early and maintain oversight.

Q3: What risks should we test for with AI agents?

Common risks include prompt injection, jailbreaks, data leakage, tool misuse and out-of-policy actions — especially when agents connect to real systems.

Q4: How is agent security testing different from normal app security?

Agents require behavioural testing. You need scenario-based evaluations that check outputs and actions over time, not just code vulnerabilities.

Q5: What’s the fastest first step?

Create 30–50 test cases for your highest-impact use case (including adversarial prompts), then run them automatically whenever prompts, retrieval logic or tools change.

Recevez chaque semaine des nouvelles et des conseils sur l'IA directement dans votre boîte de réception

En vous abonnant, vous consentez à ce que Génération Numérique stocke et traite vos informations conformément à notre politique de confidentialité. Vous pouvez lire la politique complète sur gend.co/privacy.

Génération

Numérique

Bureau du Royaume-Uni

Génération Numérique Ltée

33 rue Queen,

Londres

EC4R 1AP

Royaume-Uni

Bureau au Canada

Génération Numérique Amériques Inc

181 rue Bay, Suite 1800

Toronto, ON, M5J 2T9

Canada

Bureau aux États-Unis

Generation Digital Americas Inc

77 Sands St,

Brooklyn, NY 11201,

États-Unis

Bureau de l'UE

Génération de logiciels numériques

Bâtiment Elgee

Dundalk

A91 X2R3

Irlande

Bureau du Moyen-Orient

6994 Alsharq 3890,

An Narjis,

Riyad 13343,

Arabie Saoudite

Numéro d'entreprise : 256 9431 77 | Droits d'auteur 2026 | Conditions générales | Politique de confidentialité