The Story of AI: From Theory to Agents (2026 Report)

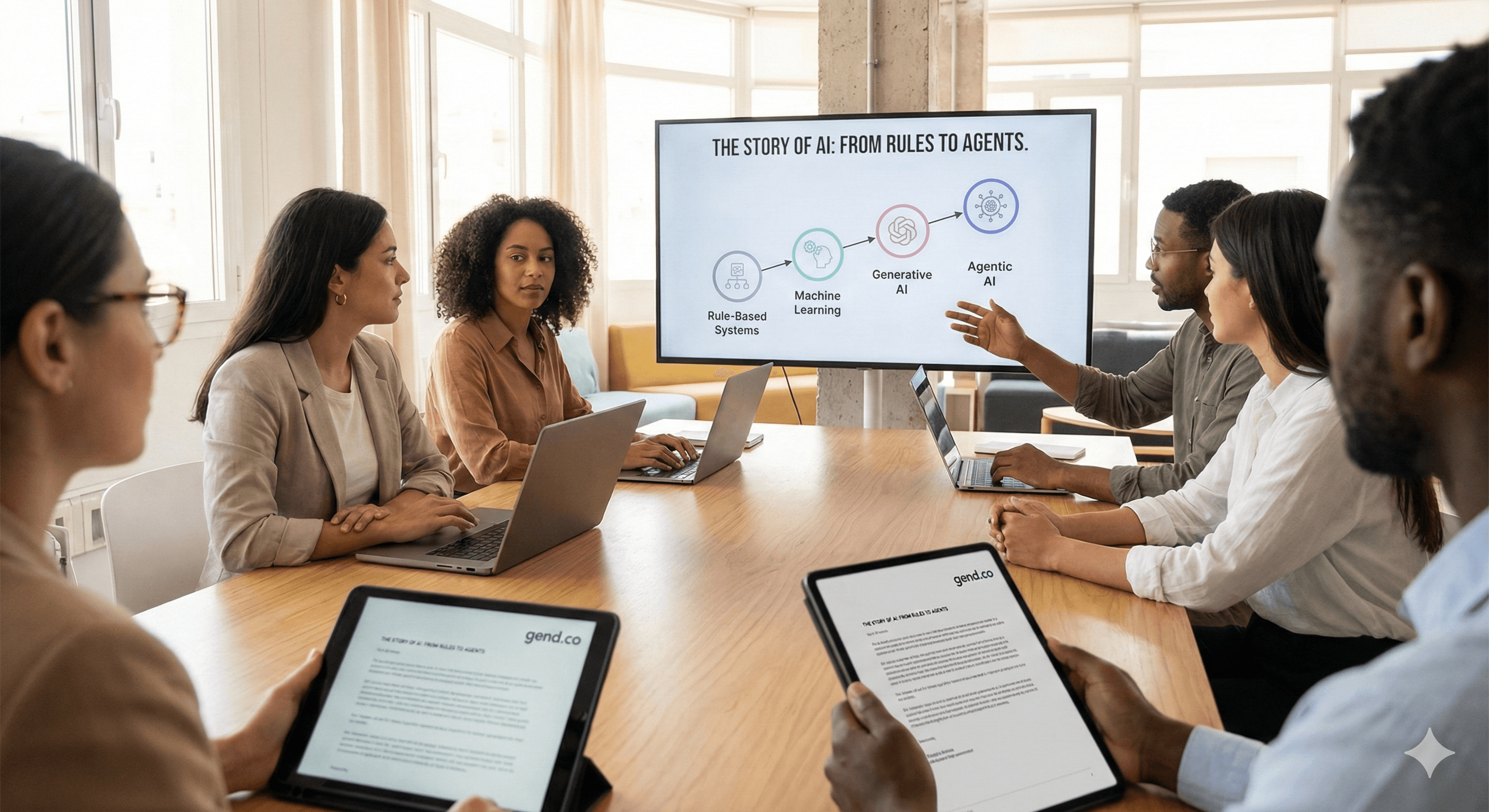

Artificial intelligence (AI) is the use of computer systems to perform tasks that normally require human intelligence—such as understanding language, recognising patterns, and making decisions. The story of AI explains how we moved from rule-based systems to machine learning, then to today’s generative AI and agents—and what that means for modern organisations.

AI isn’t new—but the way organisations can use it has changed dramatically in the last few years. What used to require specialist teams and long delivery cycles can now be prototyped in days, powered by foundation models, copilots, and increasingly, AI agents.

This report-style page tells the story of AI in a way that’s useful for business readers: what happened, why it matters now, and how to respond with the right mix of strategy, governance, and practical delivery.

Why the “story of AI” matters in 2026

Two things explain why AI feels different now.

First, adoption is no longer limited to isolated pilots. In McKinsey’s global survey, 78% of respondents said their organisations use AI in at least one business function, up from 72% in early 2024. (mckinsey.com) Second, generative AI has shifted expectations around speed: teams increasingly expect AI to draft, summarise, analyse, and automate parts of knowledge work.

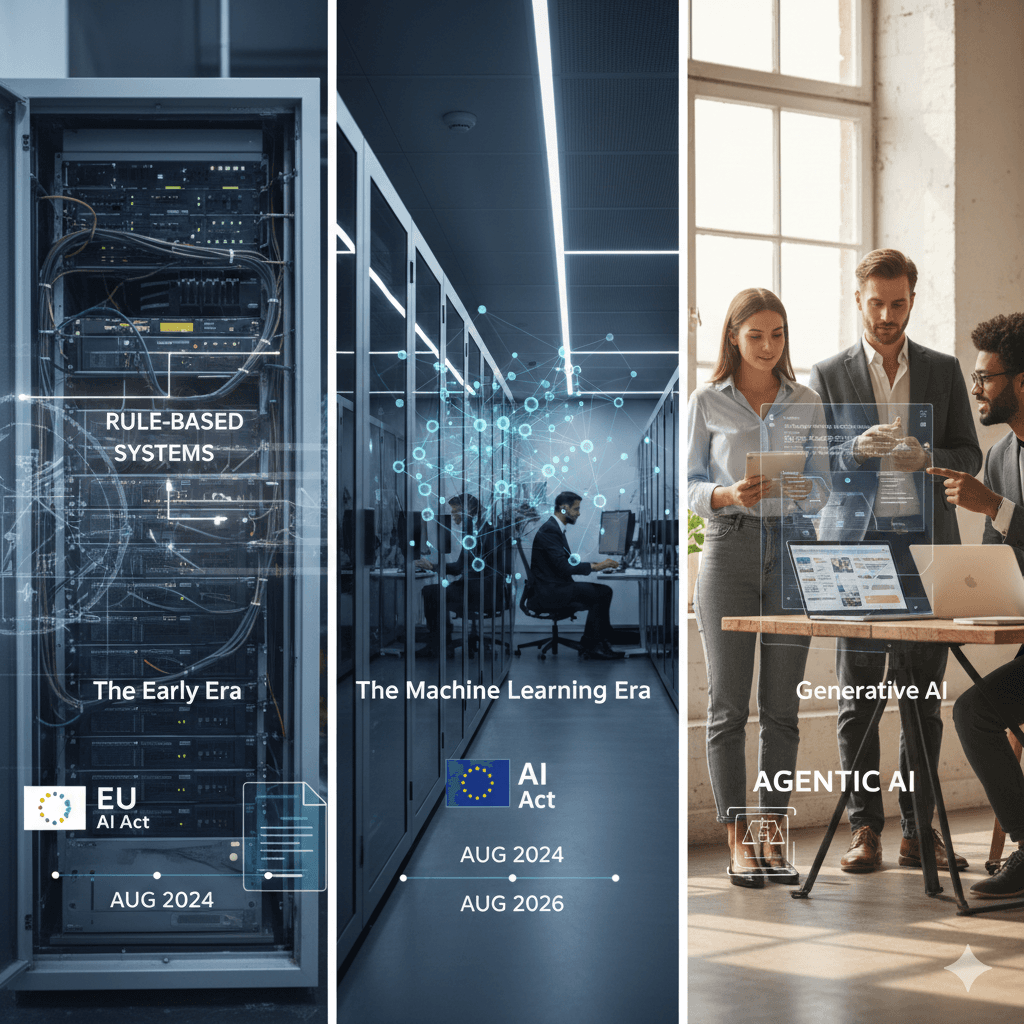

At the same time, regulation is catching up. The EU AI Act entered into force on 1 August 2024 and becomes fully applicable on 2 August 2026, with some exceptions. Earlier milestones have already passed: the rules on prohibited practices and AI literacy have applied since 2 February 2025, and the general-purpose AI obligations since 2 August 2025. (digital-strategy.ec.europa.eu) Even for UK-based organisations, EU rules can matter if you operate in or sell into the EU.

A short timeline: how we got here

1) The early era: rules, symbols, and “expert systems”

Early AI was dominated by rule-based approaches: humans encoded logic into systems. This worked well in narrow domains, but it didn’t scale to messy real-world environments.

2) The machine learning era: learning from data

As computing power grew and more data became available, machine learning became the dominant approach. Instead of hard-coding rules, systems learned patterns from examples. This powered major advances in search, recommendations, fraud detection, and forecasting.

3) Deep learning: representation at scale

Deep learning expanded what ML could do by learning more complex representations—leading to breakthroughs in computer vision, speech recognition, and natural language processing.

4) Foundation models and generative AI

Foundation models (including large language models) brought a new interface: you can use natural language to instruct systems to generate text, code, images, and summaries.

McKinsey reports that 71% of respondents say their organisations regularly use generative AI in at least one business function, up from 65% in early 2024. (mckinsey.com) This is one reason why “AI strategy” has become a board-level conversation.

5) Agentic AI: moving from ‘assist’ to ‘do’

The newest shift is toward AI agents: systems that can plan steps, call tools, retrieve information, and complete tasks with human oversight. Notion’s 2025 product updates, for example, explicitly highlight Agents alongside an expanding set of AI capabilities. (notion.com)

The practical change: teams stop thinking only about “AI features” and start designing AI-enabled workflows.

What’s changed for organisations (beyond the hype)

AI is now a workflow question

The difference between a good AI programme and a disappointing one is rarely the model. It’s the workflow: data access, approvals, quality checks, and how AI outputs are reviewed and improved.

Governance is becoming non-optional

If you’re operating in or selling into the EU, the AI Act’s phased requirements can affect procurement, vendor assessment, transparency, and staff training. (digital-strategy.ec.europa.eu) Even outside the EU, regulators and industry bodies are publishing expectations that increasingly converge on transparency, accountability, and risk controls.

The skills gap is real

Many teams can use AI tools, but fewer can operationalise them safely and consistently. The organisations that get consistent results tend to standardise four things: approved tools, prompt practices, human review criteria, and measurement.

A practical framework: how to use this report

If you’re reading this because you need to make decisions—not just learn history—use the story of AI as a diagnostic.

Step 1: Identify where AI can create value

Start with repeatable, text-heavy, or decision-support work: support tickets, policy Q&A, content operations, project documentation, internal search, or analyst workflows.

Step 2: Separate “copilot” use cases from “agent” use cases

Copilot: helps a person do the work faster (drafting, summarising, rewriting).

Agent: completes a multi-step task (collecting inputs, generating outputs, routing approvals).
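The distinction can be made concrete with a minimal Python sketch. Everything in it is hypothetical and for illustration only: the `Task` type, the step names, and the stand-in `fake_model` function, which takes the place of a real model or tool call. The copilot path returns a single draft to a person, while the agent path works through several steps and pauses whenever an approval check fails.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Task:
    """A unit of work; `steps` are hypothetical stages of a workflow."""
    name: str
    steps: list[str]
    log: list[str] = field(default_factory=list)


def copilot(task: Task, draft: Callable[[str], str]) -> str:
    """Copilot pattern: produce one draft and hand it back to the person."""
    return draft(task.name)


def agent(task: Task,
          run_step: Callable[[str], str],
          approve: Callable[[str, str], bool]) -> Task:
    """Agent pattern: run each step in order, stopping when approval is withheld."""
    for step in task.steps:
        output = run_step(step)
        if not approve(step, output):
            task.log.append(f"{step}: stopped for human review")
            break
        task.log.append(f"{step}: done")
    return task


def fake_model(prompt: str) -> str:
    """Stand-in for a model or tool call (assumption, not a real API)."""
    return f"[draft for {prompt}]"


def approve_all_but_routing(step: str, output: str) -> bool:
    """Example policy: anything that routes an approval needs a person."""
    return step != "route approval"


ticket = Task("refund request",
              ["collect inputs", "generate reply", "route approval"])
print(copilot(ticket, fake_model))
print(agent(ticket, fake_model, approve_all_but_routing).log)
```

In this sketch the approval callback is the only place where human oversight enters, which is why agent use cases usually need clearer review rules than copilot use cases.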

Step 3: Define your governance baseline

A simple baseline many organisations adopt includes:

approved tools and models

data classification rules (what can/can’t be shared)

human-in-the-loop review requirements

auditability (what was generated, when, and by whom)

training, including AI literacy

The EU AI Act specifically calls out AI literacy obligations and staged requirements. (digital-strategy.ec.europa.eu)
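As a rough illustration, most of the baseline above can be expressed as a small policy check that also produces an audit record. This is a sketch under stated assumptions, not a compliance implementation: the tool names, data classifications, and review rules below are hypothetical placeholders, and training is not modelled.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical policy values; replace with your organisation's own.
APPROVED_TOOLS = {"internal-assistant", "vendor-copilot"}
ALLOWED_DATA = {
    "public": True,
    "internal": True,
    "confidential": False,  # never shared with an AI tool in this sketch
}
REVIEW_REQUIRED = {"internal"}  # classifications that need human sign-off


@dataclass(frozen=True)
class AuditRecord:
    """What was requested, when, and by whom."""
    user: str
    tool: str
    classification: str
    allowed: bool
    needs_review: bool
    timestamp: str


def check_request(user: str, tool: str, classification: str) -> AuditRecord:
    """Apply the baseline rules and return a record suitable for logging."""
    # Unknown classifications are treated as not allowed.
    allowed = tool in APPROVED_TOOLS and ALLOWED_DATA.get(classification, False)
    return AuditRecord(
        user=user,
        tool=tool,
        classification=classification,
        allowed=allowed,
        needs_review=allowed and classification in REVIEW_REQUIRED,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )


record = check_request("amira", "internal-assistant", "internal")
print(record.allowed, record.needs_review)
```

Treating unknown tools and unknown classifications as denied by default is a deliberate choice in this sketch: it keeps the baseline safe when someone introduces a tool or data type the policy has not yet covered.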

Step 4: Build with measurement from day one

Define what “better” means before you ship:

cycle time reduction

quality and accuracy targets

customer satisfaction

cost-to-serve

compliance outcomes
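One way to make "better" explicit is to record a baseline, set a target for each metric, and compare pilot results against both. The sketch below assumes three hypothetical metrics with made-up numbers; the figures are illustrative only and do not come from any survey or benchmark.

```python
def percent_change(baseline: float, pilot: float) -> float:
    """Relative change from baseline in percent (negative means a reduction)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (pilot - baseline) / baseline * 100


def evaluate(baseline: dict, pilot: dict, targets: dict,
             higher_is_better: set) -> dict:
    """Return {metric: (rounded % change, target met?)} for each target."""
    results = {}
    for metric, target in targets.items():
        change = percent_change(baseline[metric], pilot[metric])
        if metric in higher_is_better:
            met = change >= target
        else:
            met = change <= target
        results[metric] = (round(change, 1), met)
    return results


# Illustrative numbers only.
baseline = {"cycle_time_hours": 8.0, "cost_per_ticket": 5.0, "accuracy": 0.90}
pilot = {"cycle_time_hours": 6.0, "cost_per_ticket": 4.0, "accuracy": 0.93}
# Targets as % change: at least 20% faster, 10% cheaper, 2% more accurate.
targets = {"cycle_time_hours": -20.0, "cost_per_ticket": -10.0, "accuracy": 2.0}

for metric, (change, met) in evaluate(baseline, pilot, targets, {"accuracy"}).items():
    print(f"{metric}: {change:+.1f}% target met: {met}")
```

Agreeing the targets before the pilot starts matters more than the arithmetic: it prevents success criteria from being chosen after the results are known.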

Common pitfalls (and how to avoid them)

Pitfall 1: Treating AI as a tool rollout, not a change programme

Fix: pair enablement with workflows, examples, and leadership sponsorship.

Pitfall 2: Inconsistent data access and permissions

Fix: solve identity, permissions, and source-of-truth content before scaling.

Pitfall 3: No rules for verification

Fix: define when outputs require human validation; document escalation paths.

Pitfall 4: Over-automating early

Fix: begin with assisted workflows, then graduate to agentic automation once quality is stable.

What’s next

The story of AI is now being written in organisations that treat AI as an operating model upgrade: faster decisions, better knowledge access, and more scalable delivery.

If you want to turn this narrative into a plan, the next step is to map 3–5 high-value workflows, define your governance baseline, and run a measured pilot with real users.

Next steps

Pick one workflow with clear ROI and low regulatory risk.

Standardise what tools are allowed and what data is permitted.

Build a pilot with human review and success metrics.

Document learnings and scale to the next workflow.

FAQ

Q1. What is the difference between AI, machine learning and generative AI?

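AI is the umbrella term. Machine learning is a subset of AI in which systems learn patterns from data rather than following hand-written rules. Generative AI is a type of machine learning, typically built on deep learning, that creates new content (text, images, code) based on patterns learned from large datasets.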

Q2. Why did generative AI adoption accelerate so quickly?

Because it lowered the barrier to entry: people can interact in natural language and get useful outputs quickly. In McKinsey’s survey, the share of respondents whose organisations regularly use generative AI in at least one business function rose from 65% in early 2024 to 71%. (mckinsey.com)

Q3. What are AI agents, and why do they matter?

Agents can plan and execute multi-step tasks, often by calling tools and retrieving information. They matter because they shift AI from “helping” to “doing”, changing how teams design workflows. (notion.com)

Q4. When does the EU AI Act apply, and what should organisations do now?

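The AI Act entered into force on 1 August 2024 and becomes fully applicable on 2 August 2026, with some exceptions. Phased obligations started earlier: the rules on prohibited practices and AI literacy have applied since 2 February 2025, and the general-purpose AI obligations since 2 August 2025. Organisations should begin tool and vendor assessment, AI literacy training, and risk-based governance now. (digital-strategy.ec.europa.eu)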

Q5. What’s the safest way to start using AI at work?

Start with low-risk use cases (summarisation, drafting, internal knowledge support), set clear data rules, and require human review for important decisions.

Generation Digital

Business Number: 256 9431 77 | Copyright 2026