Human-led AI adoption: lessons from Deloitte’s 2026 report

AI

Free AI at Work Playbook for managers using ChatGPT, Claude and Gemini.

➔ Download the Playbook

Human-led AI adoption means people stay accountable for decisions, validation and risk—while AI accelerates drafting, analysis and iteration. Deloitte applied this model to its 2026 State of AI report, using AI across planning, survey design, analysis, writing and marketing, then adding guardrails to keep outputs grounded and safe.

Enterprise AI has moved past the “can it do this?” phase. The harder question now is: can we use AI to change outcomes, without breaking trust, quality, or governance?

Deloitte recently shared a behind-the-scenes look at how its AI Institute used AI to reimagine the creation and experience of its 2026 State of AI in the Enterprise report. The detail matters because it shows something most organisations struggle to do: apply AI across a complex end-to-end process, not just in isolated tasks.

What follows is the distilled version: the operating model, where AI actually helped, what had to stay human-owned, and the lessons that translate directly to your organisation—whether you’re building internal copilots, scaling Microsoft 365 Copilot, rolling out enterprise search, or preparing for the next wave of agentic tools.

Why this matters now

Most leaders aren’t short of AI ideas. They’re short of execution capacity.

AI adoption often stalls for the same reasons:

Teams use different tools with different rules.

Outputs vary wildly in quality.

Nobody knows who is accountable when something goes wrong.

Governance arrives late, and trust erodes early.

Deloitte’s experiment is valuable because it treats AI like a transformation programme—complete with decision ownership, reviews, tooling choices, and guardrails. That’s what scales.

If you want a parallel playbook focused on governance, start with Making Enterprise AI Work (Securely) at Scale on Generation Digital (internal link: /blog/enterprise-ai-governance-security/).

The core idea: “human-led, AI-accelerated”

The simplest way to understand Deloitte’s model is this:

Humans lead where judgement, accountability and context matter.

AI accelerates where speed, iteration and comparison matter.

This isn’t a philosophical stance. It is an operating design.

In practice, it means you define:

what work stays human-owned,

what AI can draft or surface,

how validation happens,

how you prevent drift into risky or unapproved territory.

What stays human-owned

For Deloitte’s report workflow, human owners retained control of:

Direction and themes

Final survey questions

Interpretation and contextualisation of findings

Narrative validation against credible sources

Brand and risk approvals

This is the part many organisations skip. If you don’t name decision owners, AI becomes a diffuse responsibility—until it becomes a very specific incident.

Where AI accelerates

AI helped most in early-stage and high-friction tasks, such as:

Project planning and status updates

Theme brainstorming and survey draft questions

Extracting themes from survey results

Storyline iteration and market research support

Style and grammar checks

First drafts for collateral and marketing content

Notice what’s missing: AI did not replace domain expertise. It made it more productive.

Where Deloitte applied AI across the lifecycle

One useful takeaway from Deloitte’s approach is breadth. They didn’t confine AI to “writing a report”. They applied it to the full lifecycle.

1) Project management

Complex research and publication programmes typically lose time in coordination.

AI-supported planning can help by:

turning raw notes into a structured plan,

summarising decisions and risks,

drafting stakeholder updates in consistent language.

If you’re doing this at scale, don’t rely on ad hoc prompts. Create a prompt library and standard operating cadence (weekly summaries, risk logs, decision registers).

2) Survey creation and analysis

This is where AI can look impressive and still mislead.

Drafting survey questions and extracting themes can be accelerated—but only if you:

keep humans responsible for statistical integrity,

ensure sampling and definitions are consistent,

prevent “theme hallucination” (AI inventing patterns that aren’t in the data).

A simple safeguard: treat AI as a suggestion engine, then require explicit evidence for each theme.

3) Report creation and derivative assets

AI is strong at:

tightening structure,

smoothing tone,

generating first drafts,

comparing versions for consistency.

It is weaker at:

making strategic trade-offs,

understanding political nuance,

knowing what you can’t say publicly.

That’s why “human-led” matters most at the edges: judgement, risk, and accountability.

4) The report experience: grounded assistants and avatars

Deloitte also piloted an AI avatar and voice assistant to help readers navigate the 2026 report.

Under the hood, this is the pattern most enterprises need if they want trustworthy AI answers:

a conversational interface,

grounded retrieval against approved sources (RAG),

guardrails for intent, topics and content,

fallbacks when confidence is low,

monitoring and escalation pathways.

If you’re exploring enterprise AI search and grounded assistants, see Glean Implementation & AI Search Consulting (internal link: /glean/).

Engineering trust: what “guardrails” actually mean

Deloitte’s write-up makes a point that’s easy to underestimate: trust and safety were designed in, not bolted on.

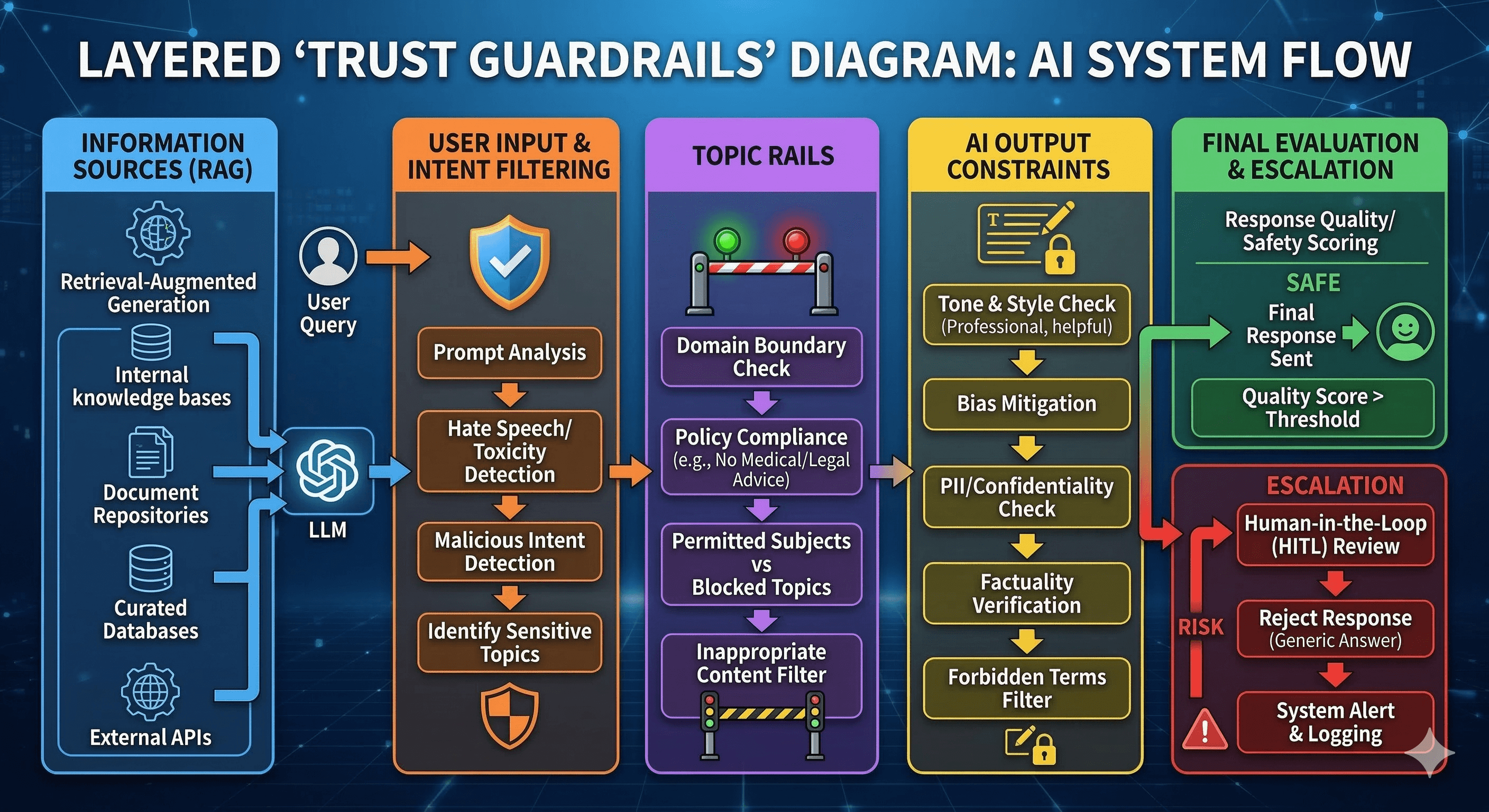

In real-world deployments, guardrails usually need four layers:

Source constraints – answers must be grounded in approved documents.

Intent constraints – the system recognises “risky” intent (e.g., legal advice, HR decisions) and routes accordingly.

Topic constraints – avoids restricted topics or provides safe, general guidance.

Output constraints – keeps responses concise, executive-appropriate, and aligned to policy.

To make this measurable, you also need evaluation. A good starting point is a weekly quality sample scored for accuracy and helpfulness (internal link: /blog/trustworthy-ai-answers-measure-what-matters/).

Lessons you can copy (without being Deloitte)

Deloitte summarised a set of “lessons forward”. Here’s how to translate them into a practical playbook.

Start with a blank slate, but align early

If you’re redesigning a flagship process, begin by asking:

What outcome are we trying to improve?

Where does time really go?

Where does risk concentrate?

Then align early on definitions and decision ownership. This is the part that prevents rework.

Make room to experiment, without breaking delivery

AI pilots fail when teams either:

promise too much too soon, or

experiment without documentation.

Build experimentation into the plan, but document what works: prompts, workflows, evaluation checks, and “no-go” zones.

Design workflows around human judgement

The goal isn’t “AI does the work”. The goal is “people do better work faster, with fewer blind spots.”

A useful split is:

humans own decisions and sign-off,

AI drafts, compares, surfaces patterns,

humans validate and reshape.

Engineer trust as a product requirement

If your AI is public-facing—or used in high-stakes internal contexts—treat trust as non-negotiable.

That means:

guardrails,

testing with adversarial prompts,

clear escalation paths,

and a governance owner who can actually say “no”.

For board-level framing, see AI Governance for Boards: Strategy, Risk & Compliance (internal link: /blog/ai-governance-evolving-board-strategies/).

Build AI fluency across the team

The hidden benefit Deloitte described is that AI improved judgement—not by being “smarter”, but by forcing teams to decide when AI is useful.

AI fluency isn’t “everyone becomes a prompt engineer”. It’s:

understanding what AI is good at,

knowing where it fails,

and being able to evaluate outputs quickly.

If you need a structured starting point, use the AI Readiness & Execution Pack (internal link: /ai-readiness-execution-pack/).

Standardise what works, stay flexible on tools

Tools change. Patterns last.

Standardise:

prompt templates,

review steps,

evaluation methods,

governance approvals,

and your knowledge grounding approach.

Then remain flexible on models and vendors.

Practical “copy this” operating model

If you want a quick model you can lift into your organisation, use this simple cadence:

Define the workflow end-to-end (not just one task).

Assign decision owners for each stage (themes, data, comms, governance).

Introduce AI accelerators where they reduce friction.

Ground outputs in approved sources.

Evaluate weekly using a small sample across teams.

Standardise prompts + checklists into repeatable playbooks.

If you also need a way to prioritise high-value AI initiatives, read How to Identify and Scale AI Use Cases (2026 Playbook) (internal link: /blog/identify-and-scale-ai-use-cases/).

Summary

Deloitte’s experiment is a helpful reminder that the organisations getting real value from AI aren’t “using tools”. They’re redesigning work.

A human-led, AI-accelerated model gives you the best of both worlds: speed and scale, without sacrificing accountability.

Next steps

If you’re scaling AI and need a governance blueprint: Making Enterprise AI Work (Securely) at Scale.

If you’re building grounded assistants or enterprise AI search: explore Glean Implementation & AI Search Consulting.

If you need an actionable readiness assessment: download the AI Readiness & Execution Pack.

FAQs

Q1: What does “human-led, AI-accelerated” actually mean?

It means people stay accountable for decisions, validation and risk, while AI supports drafting, analysis, comparison and iteration. It’s an operating model, not a slogan.

Q2: Where does AI add the most value in knowledge work?

AI is most useful where work is repetitive or high-friction: summarising, drafting first versions, comparing documents, extracting themes, and producing consistent comms—provided humans validate outcomes.

Q3: How do we prevent hallucinations in enterprise AI assistants?

Ground answers in approved sources, add intent/topic guardrails, use safe fallbacks when confidence is low, and evaluate outputs regularly with real user tasks.

Q4: What governance basics should we put in place before scaling AI?

Set ownership and accountability, define approved tools and data boundaries, create review and escalation paths, and align to a recognised risk framework.

Q5: Do we need an AI policy or an AI management system?

A policy is a start, but scaling usually requires a management system: repeatable controls, training, monitoring, and measurable KPIs that boards can oversee.

Get weekly AI news and advice delivered to your inbox

By subscribing you consent to Generation Digital storing and processing your details in line with our privacy policy. You can read the full policy at gend.co/privacy.

Generation

Digital

UK Office

Generation Digital Ltd

33 Queen St,

London

EC4R 1AP

United Kingdom

Canada Office

Generation Digital Americas Inc

181 Bay St., Suite 1800

Toronto, ON, M5J 2T9

Canada

USA Office

Generation Digital Americas Inc

77 Sands St,

Brooklyn, NY 11201,

United States

EU Office

Generation Digital Software

Elgee Building

Dundalk

A91 X2R3

Ireland

Middle East Office

6994 Alsharq 3890,

An Narjis,

Riyadh 13343,

Saudi Arabia

Company No: 256 9431 77 | Copyright 2026 | Terms and Conditions | Privacy Policy