DraftNEPABench: AI Can Speed Up NEPA Drafting by Up to 15%

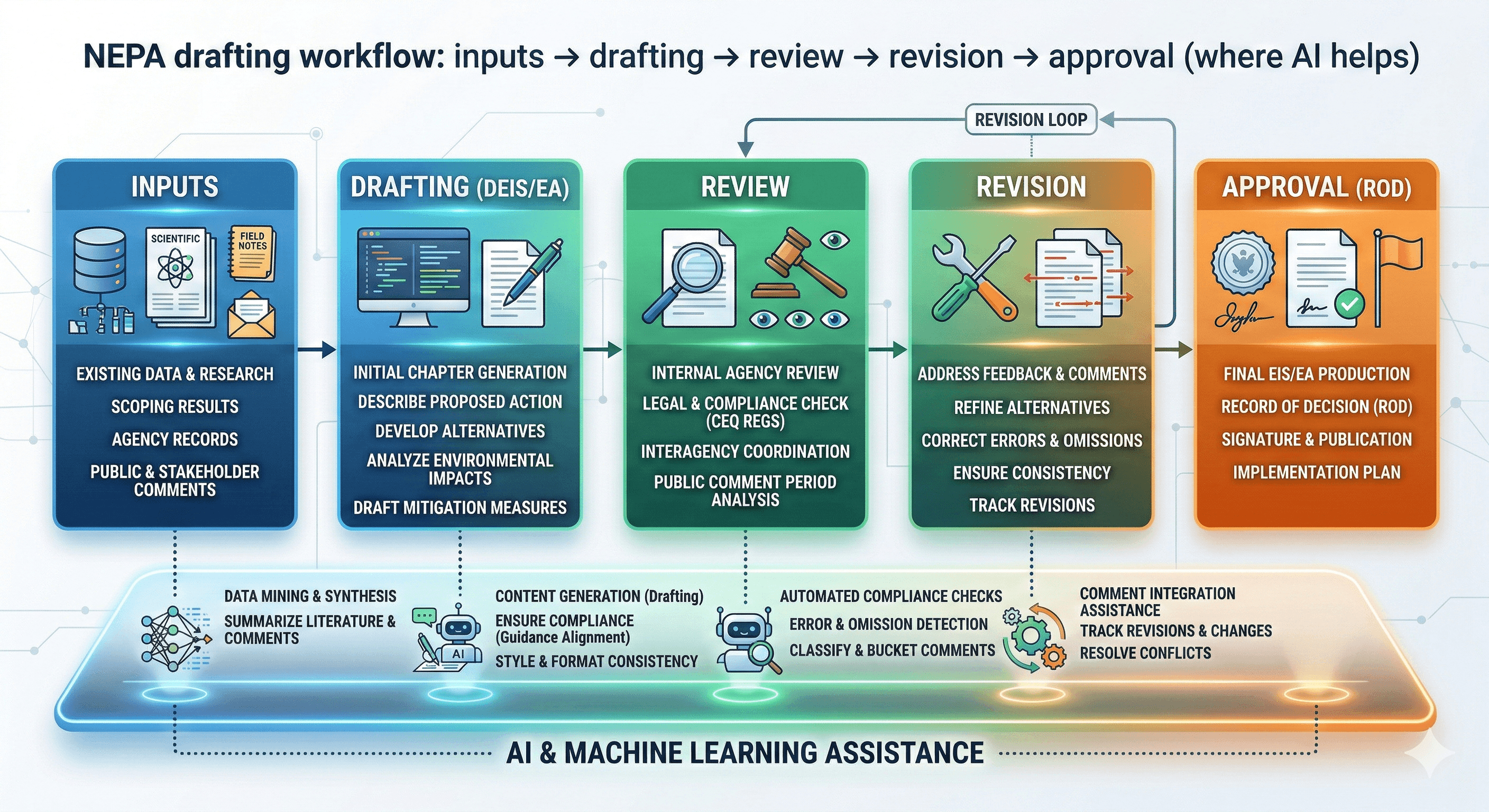

DraftNEPABench is a benchmark from OpenAI and Pacific Northwest National Laboratory that tests whether AI agents can accelerate NEPA permitting workflows. Across representative drafting tasks spanning 18 federal agencies, experts found coding agents could save 1–5 hours per subsection—up to roughly a 15% reduction in drafting time—while keeping human reviewers in control.

Permitting is one of the biggest bottlenecks in delivering infrastructure, whether that’s energy projects, transport upgrades, water systems, or new manufacturing capacity. Much of the delay comes not from a lack of ambition but from the sheer volume of technical and regulatory drafting required for environmental review.

On 26 February 2026, OpenAI and the U.S. Department of Energy’s Pacific Northwest National Laboratory (PNNL) announced DraftNEPABench: a benchmark designed to evaluate whether AI agents can meaningfully speed up NEPA drafting tasks, without compromising the accuracy and reference standards those documents require.

Early findings are promising. Across a representative set of tasks spanning NEPA document sections from 18 federal agencies, subject-matter experts found generalised coding agents could reduce drafting time by up to roughly 15%—equating to 1–5 hours saved per subsection.

What is DraftNEPABench?

DraftNEPABench is a structured benchmark for assessing how well AI models perform on document-heavy NEPA drafting tasks, such as sections of environmental impact statements.

It is built to reflect real-world requirements where drafting isn’t just writing—it involves:

- reading and synthesising hundreds of pages of technical material
- verifying facts across environmental, engineering and regulatory sources
- producing structured outputs that meet detailed legal and technical criteria
- citing the right references clearly

Why this matters now

1) Permitting speed affects national delivery capacity

When reviews take years, costs rise, projects stall, and communities wait longer for benefits.

DraftNEPABench is framed as an attempt to modernise the drafting portion of the workflow—so experts can focus more on judgement, oversight, and decision quality.

2) AI is moving into “physical world” decision chains

As AI systems influence planning and delivery in the physical world, the bar for accuracy and reference integrity rises. DraftNEPABench is designed to make those capabilities measurable.

How DraftNEPABench tests AI performance

OpenAI and PNNL’s PermitAI™ team built the benchmark with 19 subject-matter experts familiar with NEPA review. Rather than testing vague “writing ability”, the benchmark focuses on well-specified drafting work where relevant context is provided.

A key implementation detail is the use of generalised coding agents (tested using Codex CLI) to unlock strong performance on research, analysis and drafting tasks that involve a file system—similar to how permitting teams work with documents and sources.

DraftNEPABench also uses a scoring approach that evaluates drafts against criteria like:

- structure
- clarity
- accuracy
- use of references
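
As an illustration only, ratings on these four criteria could be combined into a single score as sketched below. The 0 to 5 rating scale, the equal default weights, and the names `CRITERIA` and `rubric_score` are assumptions made for this example; the announcement does not describe the benchmark's actual scoring formula.

```python
# Hypothetical rubric aggregation; NOT DraftNEPABench's actual scoring method.
CRITERIA = ("structure", "clarity", "accuracy", "references")


def rubric_score(ratings, weights=None, scale_max=5):
    """Return a weighted score in [0, 1] from per-criterion ratings on 0..scale_max."""
    # Assumed default: every criterion counts equally.
    weights = weights or {name: 1.0 for name in CRITERIA}
    missing = [name for name in CRITERIA if name not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    for name in CRITERIA:
        if not 0 <= ratings[name] <= scale_max:
            raise ValueError(f"{name} rating must be between 0 and {scale_max}")
    total_weight = sum(weights[name] for name in CRITERIA)
    weighted = sum(weights[name] * ratings[name] / scale_max for name in CRITERIA)
    return weighted / total_weight
```

Passing a custom `weights` dictionary (for example, a higher weight on `accuracy`) shows how a team might emphasise the criteria that matter most for its own review process.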

What the “15% time saving” actually means

The headline statistic can be easy to misread.

The benchmark does not claim AI completes full permitting decisions. It suggests that for drafting tasks with available context, agents can reduce the drafting workload by:

- 1–5 hours per subsection
- up to roughly 15% of drafting time

In practice, that points to an assistive way of working: AI handles the time-consuming first draft and evidence formatting, while humans remain accountable for judgement and final sign-off.
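
To make the arithmetic concrete, the sketch below converts a percentage saving into hours for a given baseline. The baseline figures are hypothetical inputs chosen for illustration, not numbers from the benchmark; only the roughly 15% upper bound comes from the reported results.

```python
def hours_saved(baseline_hours, saving_fraction=0.15):
    """Hours saved if drafting time falls by saving_fraction (0.15 means 15%)."""
    if baseline_hours < 0 or not 0 <= saving_fraction <= 1:
        raise ValueError("baseline must be >= 0 and fraction within [0, 1]")
    return baseline_hours * saving_fraction


# Hypothetical baselines: hours to draft one subsection without AI assistance.
for baseline in (10, 20, 30):
    print(f"{baseline} h baseline -> up to {hours_saved(baseline):.1f} h saved")
```

Under these assumed baselines, a 15% saving works out to 1.5, 3.0 and 4.5 hours, which sits inside the reported 1–5 hour range per subsection.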

Practical steps for organisations exploring AI in permitting workflows

If you work with regulated, document-heavy review processes (government or industry), DraftNEPABench is useful as a reference model.

Step 1: Identify the drafting bottlenecks

Start with the parts of the workflow that are repetitive but high-effort:

- summarising technical reports
- cross-checking citations and references
- drafting standardised sections and templates

Step 2: Establish governance before scaling

For sensitive workflows, guardrails are non-negotiable:

- define which source systems are authorised
- require references for factual claims
- implement audit logs and version control
- keep human review and sign-off mandatory
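
Two of these guardrails, required references and an allowlist of authorised sources, can be checked automatically before a draft reaches a reviewer. The following is a minimal sketch, assuming a draft is represented as a list of claims with attached source identifiers; `AUTHORISED_SOURCES`, `Claim` and `check_claims` are hypothetical names for illustration and do not belong to any tool mentioned in this article.

```python
from dataclasses import dataclass, field

# Hypothetical allowlist of approved source identifiers.
AUTHORISED_SOURCES = {"agency-eis-archive", "site-survey-2024"}


@dataclass
class Claim:
    text: str
    sources: list = field(default_factory=list)  # identifiers of cited sources


def check_claims(claims, authorised=AUTHORISED_SOURCES):
    """Return (claim text, problem) pairs for a human reviewer to resolve."""
    issues = []
    for claim in claims:
        if not claim.sources:
            issues.append((claim.text, "no reference"))
        for source in claim.sources:
            if source not in authorised:
                issues.append((claim.text, f"unauthorised source: {source}"))
    return issues
```

The function only reports problems; it does not edit or reject the draft, so the decision stays with the human reviewer.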

Step 3: Design the workflow for review, not autopilot

AI is most useful when it produces outputs that are easy for experts to validate.

Aim for:

- structured drafts with clear headings
- reference lists and traceability
- clear “unknowns” or gaps flagged for humans
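
One way to picture such a review-first output is a small data structure that carries the heading, the references, and the flagged gaps alongside the text. This is a sketch under assumed names (`DraftSection`, `review_summary`), not a format defined by the benchmark.

```python
from dataclasses import dataclass, field


@dataclass
class DraftSection:
    heading: str
    body: str
    references: list = field(default_factory=list)      # citation keys used in body
    open_questions: list = field(default_factory=list)  # gaps flagged for humans


def review_summary(section):
    """Render a short plain-text checklist for the reviewer."""
    lines = [
        f"Section: {section.heading}",
        f"References cited: {len(section.references)}",
    ]
    if section.open_questions:
        lines.append("Needs expert input:")
        lines.extend(f"  - {question}" for question in section.open_questions)
    else:
        lines.append("No gaps flagged.")
    return "\n".join(lines)
```

Keeping the open questions as a separate field, rather than burying them in the body text, lets a reviewer see at a glance where their judgement is needed.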

Step 4: Operationalise delivery with the right tools

If you want AI-enabled drafting to reduce timelines in practice, you need a clear operating rhythm:

- Track tasks, owners and approvals in Asana
- Maintain policy and decision logs in Notion
- Use Miro for cross-functional review workshops and review maps
- Improve retrieval of approved sources using Glean (where appropriate)

Limitations to keep in mind

OpenAI notes that DraftNEPABench evaluates model capability on well-specified tasks where relevant context is available. Real-world deployments involve more ambiguity, discretion, and iterative expert feedback.

The benchmark also surfaced a common issue: if references are incomplete or out of date, models may not reliably identify those discrepancies unless explicitly instructed to look for them.
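
A simple pre-check can surface old references before drafting starts, so the issue does not depend on the model noticing it. The sketch below assumes each reference has a known publication date; the five-year threshold, the 365-day year approximation, and the name `stale_references` are all hypothetical choices for illustration.

```python
from datetime import date, timedelta


def stale_references(references, today, max_age_years=5):
    """Return sorted keys of references published before the age cutoff.

    `references` maps a citation key to its publication date.
    The cutoff uses 365-day years, which is an approximation.
    """
    cutoff = today - timedelta(days=365 * max_age_years)
    return sorted(key for key, published in references.items() if published < cutoff)
```

The returned keys could be passed to the drafting agent as an explicit instruction to verify those sources, or routed to a human to replace them.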

How Generation Digital can help

Even if your organisation doesn’t work in US federal permitting, DraftNEPABench offers a clear blueprint for introducing AI into complex document workflows safely.

Generation Digital can help you:

- evaluate AI use cases and time-saving potential
- set governance and approval workflows
- design “review-first” drafting systems
- train teams on AI-safe writing and verification practices

Summary

DraftNEPABench is a benchmark from OpenAI and PNNL designed to test whether AI agents can accelerate NEPA drafting workflows. Early results show potential time savings of 1–5 hours per subsection—up to roughly 15%—while keeping human experts responsible for review and approval.

Next steps: If you want to apply the same principles to your own regulated documentation processes, speak to Generation Digital about workflow design, governance, and rollout.

FAQs

Q1: How does AI reduce permitting time?

AI can generate structured first drafts, synthesise long technical sources, and format references quickly—reducing manual effort for repetitive drafting steps while leaving judgement and final approval to experts.

Q2: What is NEPA?

NEPA stands for the National Environmental Policy Act, a US law requiring federal agencies to assess the environmental effects of proposed actions before making decisions.

Q3: Who developed DraftNEPABench?

DraftNEPABench was developed by OpenAI in partnership with the U.S. Department of Energy’s Pacific Northwest National Laboratory (PNNL) and its PermitAI™ team.

Q4: Does DraftNEPABench replace human reviewers?

No. The benchmark is designed to measure drafting support. AI-generated drafts still require expert oversight, validation, and sign-off, especially where decisions have legal, environmental, or public impact.

Q5: What’s the key risk to manage?

Source quality. If inputs are incomplete or out of date, AI may produce confident drafts that require careful verification. Controls like reference requirements and audit logs help manage this.
