Malicious AI: Detection and Defence for Modern Teams

Free AI at Work Playbook for managers using ChatGPT, Claude and Gemini.

➔ Download the Playbook

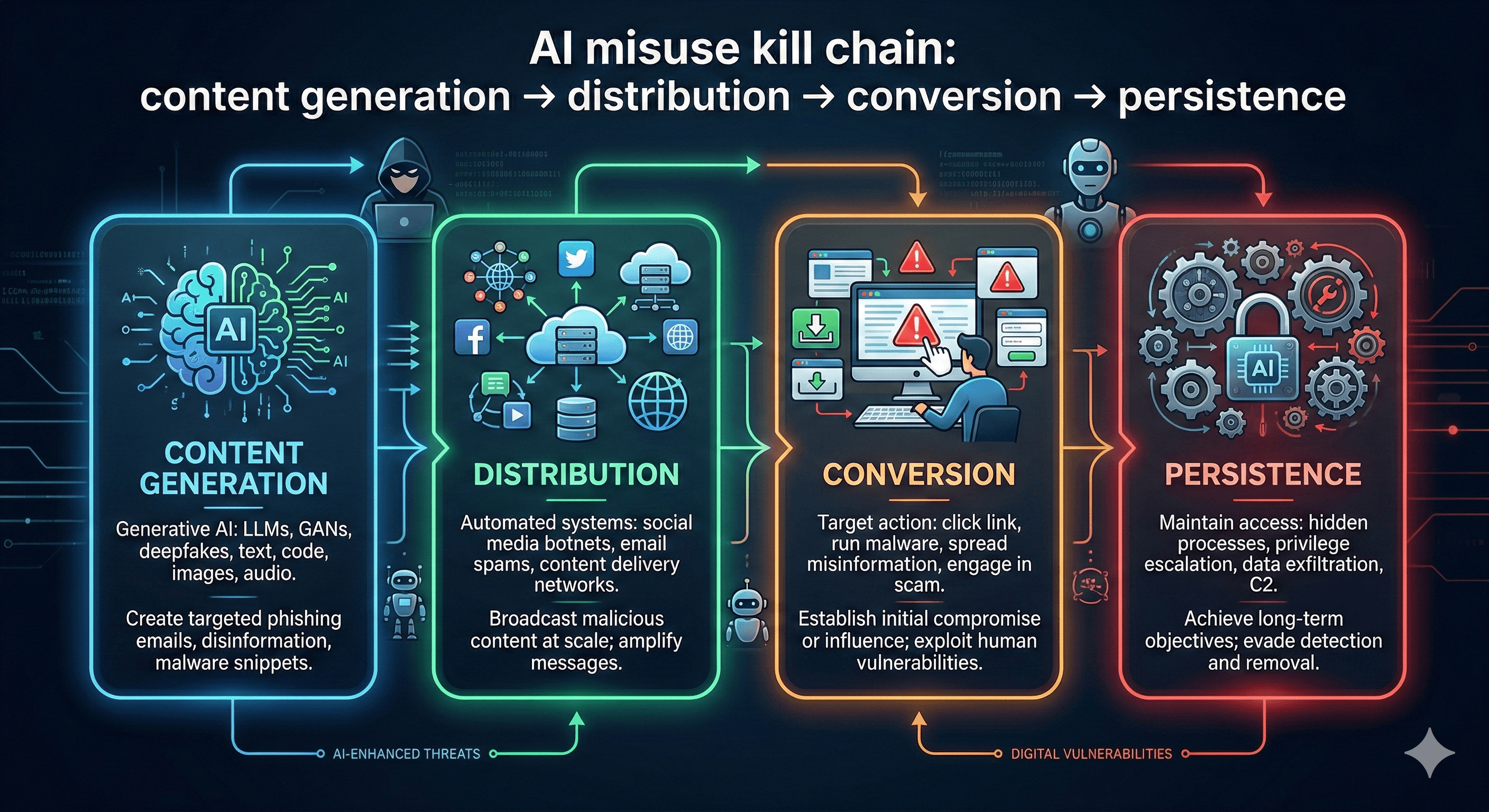

Malicious AI use occurs when attackers combine AI models with websites, social media and other tooling to scale phishing, fraud, misinformation or cyber operations. Effective defence relies on layered detection (monitoring behaviour, content signals and infrastructure) plus controls such as identity hardening, policy enforcement and rapid incident response.

Malicious use of AI isn’t a sci-fi scenario where a model “hacks you”. In the real world, threat actors tend to use AI as an accelerator, pairing it with websites, social platforms, fake personas and conventional cyber tooling to make attacks faster, cheaper and harder to spot.

Recent threat intelligence reports show a consistent pattern: AI helps criminals research targets, write convincing lures, generate content at scale and iterate quickly, but each operation still depends on distribution channels (social media, email, ads) and infrastructure (domains, hosting, accounts).

This is good news for defenders: if an attack still needs accounts, traffic and observable behaviour, it can still be detected.

How attackers are leveraging AI through websites and social platforms

1) Phishing and fraud that “shape-shifts”

AI improves the quality and volume of social engineering. Instead of sending one generic lure, attackers can generate thousands of variations and adjust content based on the victim’s role, industry, region, and device. Some campaigns also use dynamic phishing pages that change after delivery.

What to look for: sudden increases in lookalike domain registrations, high-velocity link creation, and clusters of message variants that share the same conversion infrastructure (landing pages, redirectors or payment endpoints).
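To make the lookalike-domain signal concrete, here is a minimal Python sketch that screens newly observed domains against a protected brand domain. It assumes you already receive a feed of new registrations or certificate names; the protected domain `examplebank.com`, the small homoglyph map and the edit-distance threshold of 2 are illustrative assumptions, not tuned values.

```python
"""Screen newly observed domains for lookalikes of a protected brand domain.

Sketch only: PROTECTED, HOMOGLYPHS and max_distance are illustrative assumptions.
"""

PROTECTED = {"examplebank.com"}  # hypothetical brand domain(s)

# Small, incomplete map of digits often swapped in for letters.
HOMOGLYPHS = str.maketrans({"0": "o", "1": "l", "3": "e", "5": "s"})


def levenshtein(a: str, b: str) -> int:
    """Edit distance (insertions, deletions, substitutions) via dynamic programming."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        prev = curr
    return prev[-1]


def registrable_label(domain: str) -> str:
    """'login.examplebank.com' -> 'examplebank'.

    Naive: assumes a single-label suffix such as .com; use the Public Suffix List in practice.
    """
    parts = domain.lower().rstrip(".").split(".")
    return parts[-2] if len(parts) >= 2 else parts[0]


def is_lookalike(candidate: str, max_distance: int = 2) -> bool:
    d = candidate.lower().rstrip(".")
    # The genuine domain and its own subdomains are not lookalikes.
    if d in PROTECTED or any(d.endswith("." + p) for p in PROTECTED):
        return False
    cand = registrable_label(d).translate(HOMOGLYPHS).replace("-", "")
    for protected in PROTECTED:
        target = registrable_label(protected)
        # Flag the brand embedded in a longer label, or a label within a few edits of it.
        if target in cand or levenshtein(cand, target) <= max_distance:
            return True
    return False


for domain in ["examplebank.com", "examp1ebank.com", "examplebank-login.net", "weather.com"]:
    print(domain, is_lookalike(domain))  # False, True, True, False respectively
```

A match is a lead for review rather than a verdict; in practice the naive label extraction should be replaced with a Public Suffix List lookup so domains such as `.co.uk` are handled correctly.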

2) Influence and misinformation operations

AI makes it easier to create persuasive posts, profiles, comments, and even synthetic imagery—then distribute them via coordinated networks. Researchers have warned about AI-driven “bot swarms” that mimic human behaviour and infiltrate communities.

What to look for: coordination signals (timing, repetition, shared URLs), unnatural account growth patterns, and content reuse across multiple identities.
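The timing and shared-URL signals can be combined into one simple check: flag any URL that several distinct accounts post within a short burst. In this Python sketch the `Post` record, the three-account threshold and the 10-minute window are assumptions for illustration; real input would come from platform or social-listening exports.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Post:
    account: str
    url: str
    posted_at: datetime


def coordinated_urls(posts, min_accounts=3, window=timedelta(minutes=10)):
    """Return {url: accounts} for URLs posted by at least `min_accounts`
    distinct accounts within a span no longer than `window` (assumed defaults)."""
    by_url = defaultdict(list)
    for post in posts:
        by_url[post.url].append(post)

    flagged = {}
    for url, items in by_url.items():
        items.sort(key=lambda p: p.posted_at)
        start = 0
        for end in range(len(items)):
            # Advance the left edge until the window spans at most `window`.
            while items[end].posted_at - items[start].posted_at > window:
                start += 1
            accounts = {p.account for p in items[start:end + 1]}
            if len(accounts) >= min_accounts:
                flagged[url] = sorted(accounts)
                break  # one qualifying burst is enough to flag the URL
    return flagged


t0 = datetime(2026, 1, 12, 9, 0)
posts = [
    Post("acct_a", "https://promo.example/offer", t0),
    Post("acct_b", "https://promo.example/offer", t0 + timedelta(minutes=2)),
    Post("acct_c", "https://promo.example/offer", t0 + timedelta(minutes=4)),
    Post("acct_d", "https://news.example/story", t0),
    Post("acct_e", "https://news.example/story", t0 + timedelta(hours=3)),
]
print(coordinated_urls(posts))
# {'https://promo.example/offer': ['acct_a', 'acct_b', 'acct_c']}
```

Genuine viral links also produce bursts, so a flagged URL is best read alongside the other signals listed above, such as account age and content reuse.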

3) Reconnaissance and operational support

Even when AI isn’t writing malware, it’s often used for rapid research: summarising public information, drafting scripts, troubleshooting, and accelerating planning.

What to look for: spikes in automated browsing and scraping, unusual tooling fingerprints, and repeated access attempts to public documentation, support portals, or login flows.
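A sliding-window counter is one simple way to surface spikes in automated browsing or repeated access attempts. This Python sketch assumes request events arrive in timestamp order with some client identifier (IP address, session or API key); the `RateSpikeDetector` class and its default limit of 60 requests per 60 seconds are hypothetical.

```python
from collections import defaultdict, deque


class RateSpikeDetector:
    """Flag clients that exceed `limit` requests within the last `window_s` seconds.

    Assumes events for each client arrive in timestamp order. Defaults are illustrative.
    """

    def __init__(self, limit: int = 60, window_s: float = 60.0):
        self.limit = limit
        self.window_s = window_s
        self._hits = defaultdict(deque)  # client id -> recent request timestamps

    def observe(self, client: str, ts: float) -> bool:
        """Record one request and return True if the client is now over the limit."""
        hits = self._hits[client]
        hits.append(ts)
        # Evict timestamps that have fallen out of the sliding window.
        while ts - hits[0] > self.window_s:
            hits.popleft()
        return len(hits) > self.limit


# Usage: a client hitting a login page every 0.5 s trips a 5-per-10-s limit.
detector = RateSpikeDetector(limit=5, window_s=10)
flags = [detector.observe("203.0.113.7", i * 0.5) for i in range(8)]
print(flags)  # [False, False, False, False, False, True, True, True]
```

Scoping a detector like this to sensitive paths such as login flows and support portals keeps noise down; a flag is a prompt to inspect user agent, network origin and navigation pattern, not proof of abuse.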

4) Deepfakes and synthetic media in the “trust layer”

Synthetic voice, video and imagery are increasingly used to lend credibility: fake leaders, fake customer testimonials, fake screen recordings, or “proof” that an event happened.

What to look for: verification failures, mismatched metadata, and sudden new communication channels (e.g., an ‘urgent’ voice note from a new number).

Why detection is harder (and what still works)

AI changes the economics of attacks: content can be created faster and tailored more easily. But threat reports on AI misuse consistently emphasise that AI sits inside a wider system of accounts, infrastructure and distribution.

That means defence should focus on the parts attackers can’t avoid:

identity and access (accounts must log in)

infrastructure (domains must resolve)

distribution (posts/emails must be sent)

conversion (users must click, pay, share, or install)

A practical detection and defence playbook

1) Monitor for behavioural anomalies (not just “AI content”)

Content classifiers can help, but attackers can evade them. Behaviour is harder to hide.

Prioritise:

new account creation bursts

impossible travel / login anomalies

high-volume posting, commenting, or DM activity

rapid domain registration and redirects

repeated failed auth attempts and MFA fatigue patterns
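Impossible travel is one of the easier anomalies on that list to express in code: compare consecutive logins for the same user and ask whether the implied speed is physically plausible. This Python sketch assumes each login already carries approximate coordinates from IP geolocation; the 900 km/h ceiling and the 100 km noise tolerance are assumed values, not standards.

```python
import math
from dataclasses import dataclass
from datetime import datetime

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_KMH = 900.0   # assumption: roughly airliner cruising speed
MIN_DISTANCE_KM = 100.0     # assumption: ignore small jumps caused by geo-IP noise


@dataclass
class Login:
    user: str
    lat: float
    lon: float
    at: datetime


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two lat/lon points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def is_impossible_travel(prev: Login, curr: Login) -> bool:
    """True if moving between two consecutive logins (in chronological order)
    would require an implausible speed."""
    distance = haversine_km(prev.lat, prev.lon, curr.lat, curr.lon)
    if distance < MIN_DISTANCE_KM:
        return False
    hours = (curr.at - prev.at).total_seconds() / 3600
    if hours <= 0:
        return True  # far-apart logins with no elapsed time between them
    return distance / hours > MAX_PLAUSIBLE_KMH


london = Login("alice", 51.5074, -0.1278, datetime(2026, 3, 2, 9, 0))
toronto = Login("alice", 43.6532, -79.3832, datetime(2026, 3, 2, 10, 0))
print(is_impossible_travel(london, toronto))  # True: roughly 5,700 km in one hour
```

Geolocation for VPN and mobile-carrier addresses is often imprecise, so a result like this is usually combined with device and MFA signals before an account is locked.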

2) Harden identity: make account takeover expensive

Most AI-enabled attacks still rely on compromised or fake accounts.

Minimum controls:

phishing-resistant MFA (where feasible)

conditional access policies

privileged access management

strong verification for changes to payment details

clear anti-impersonation policies for executives
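Strong verification for payment-detail changes is mostly process, but the core rule can be written down so systems enforce it. In this Python sketch all class and field names are hypothetical: a change is allowed only after someone calls back on the number captured at onboarding (never on contact details supplied in the request) and a second person approves it.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Supplier:
    name: str
    phone_on_file: str   # captured at onboarding; never updated from a change request
    bank_account: str


@dataclass
class PaymentChangeRequest:
    supplier: Supplier
    new_bank_account: str
    contact_in_request: str                  # whatever number/email the requester supplied
    callback_number_used: Optional[str] = None
    callback_confirmed: bool = False
    approvers: set[str] = field(default_factory=set)


def can_apply(req: PaymentChangeRequest, requested_by: str) -> tuple[bool, str]:
    """Return (allowed, reason). Hypothetical rules: verified callback + independent approver."""
    if not req.callback_confirmed:
        return False, "callback not confirmed"
    if req.callback_number_used != req.supplier.phone_on_file:
        # Any number supplied in the request itself may be attacker-controlled.
        return False, "callback must use the number on file"
    if not (req.approvers - {requested_by}):
        return False, "needs an approver other than the requester"
    return True, "ok"


acme = Supplier("Acme Ltd", phone_on_file="+44 20 7946 0000", bank_account="OLD-ACCOUNT")
req = PaymentChangeRequest(acme, "NEW-ACCOUNT", contact_in_request="+44 7700 900123")
req.callback_number_used, req.callback_confirmed = "+44 7700 900123", True
print(can_apply(req, requested_by="ap_clerk"))
# (False, 'callback must use the number on file')
```

The same rule covers the ‘urgent’ voice or video instruction scenario: the callback channel comes from your own records, never from the message asking for the change.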

3) Protect your brand surface

If attackers can’t convincingly impersonate you, their conversion rates drop.

Practical steps:

monitor lookalike domains and certificate issuance

lock down social handles and verified accounts

publish official communication channels (and stick to them)

implement DMARC, DKIM and SPF for email authentication
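For the email-authentication step, a quick check of your published DMARC policy helps catch records left in monitoring mode. DMARC policies are published as a TXT record at `_dmarc.<your-domain>` using `tag=value` pairs separated by semicolons. The Python sketch below parses such a string and reports a few common gaps; fetching the record from DNS is left out, and the checks are an assumed subset rather than a full RFC 7489 validator.

```python
def parse_dmarc(record: str) -> dict[str, str]:
    """Split a DMARC TXT record ('v=DMARC1; p=reject; ...') into ordered tag -> value pairs."""
    tags: dict[str, str] = {}
    for part in record.split(";"):
        part = part.strip()
        if not part:
            continue  # tolerate a trailing semicolon
        key, sep, value = part.partition("=")
        if not sep:
            raise ValueError(f"malformed tag: {part!r}")
        tags[key.strip().lower()] = value.strip()
    return tags


def dmarc_findings(record: str) -> list[str]:
    """Report a few common gaps; an assumed subset of checks, not a full validator."""
    tags = parse_dmarc(record)
    findings = []
    # The version tag must come first and match exactly.
    if next(iter(tags.items()), None) != ("v", "DMARC1"):
        findings.append("first tag must be v=DMARC1")
    policy = tags.get("p", "").lower()
    if not policy:
        findings.append("missing p= policy")
    elif policy == "none":
        findings.append("p=none is monitoring only; receivers are not asked to act on failures")
    if "rua" not in tags:
        findings.append("no rua= address, so no aggregate reports will be sent to you")
    return findings


print(dmarc_findings("v=DMARC1; p=none"))
print(dmarc_findings("v=DMARC1; p=reject; rua=mailto:dmarc-reports@example.com"))  # []
```

Moving from `p=none` to `p=quarantine` or `p=reject` is what actually makes spoofed mail from your domain harder to deliver, which is the impersonation risk this section is about.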

4) Treat AI as part of the incident response plan

You need playbooks for:

AI-assisted phishing campaigns

synthetic media incidents

coordinated inauthentic behaviour

staff receiving ‘urgent’ voice/video instructions

Run tabletop exercises that include:

comms sign-off pathways

rapid takedown requests (domains, posts, ads)

escalation criteria and regulatory thresholds
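Escalation criteria are easier to rehearse in a tabletop exercise when they are written down unambiguously. The Python sketch below encodes a hypothetical rule set: the `Incident` fields, the 10,000-reach threshold and the team names are placeholders for your own criteria. The 72-hour deadline reflects the UK/EU GDPR expectation for notifying the regulator of a reportable personal data breach, counted from when you become aware of it; other regimes may apply to you.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    kind: str                    # e.g. "ai_phishing", "synthetic_media", "coordinated_inauthentic"
    detected_at: datetime        # treated here as the moment you became aware
    personal_data_involved: bool
    executive_impersonated: bool
    public_reach: int            # e.g. views or shares of the malicious content


def escalation_plan(inc: Incident) -> dict:
    """Return who to involve and any regulatory deadline to track.

    All thresholds and team names are hypothetical placeholders.
    """
    notify = {"soc"}
    deadline = None
    if inc.executive_impersonated or inc.kind == "synthetic_media":
        notify |= {"comms", "legal"}
    if inc.public_reach >= 10_000:
        notify |= {"comms", "exec_sponsor"}
    if inc.personal_data_involved:
        notify |= {"legal", "dpo"}
        # UK/EU GDPR: notify the regulator of a reportable breach within 72 hours of awareness.
        deadline = inc.detected_at + timedelta(hours=72)
    return {"notify": sorted(notify), "regulatory_deadline": deadline}


deepfake = Incident("synthetic_media", datetime(2026, 5, 1, 8, 0), True, True, 500)
print(escalation_plan(deepfake))
```

Running a few scenarios through a function like this during a tabletop quickly shows where the written criteria and people’s expectations disagree.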

5) Use “guardian” controls around AI tools

Enterprise AI use introduces new risks: prompt injection, data leakage, and misuse.

Controls to consider:

tool access policies (who can use what, for which tasks)

logging and audit trails for AI tool usage

red-teaming and abuse simulation

safety filters and output monitoring where supported
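Tool access policies and audit trails can live in a thin wrapper that every AI tool call passes through. In this Python sketch the `POLICY` table, the roles, the tool names and the `call_model` function are hypothetical stand-ins; the audit entry records prompt length rather than prompt text, which is one way to stop the log itself becoming a data-leakage risk.

```python
import json
import time
from typing import Callable

# Hypothetical policy table: (role, tool) -> tasks that role may use the tool for.
POLICY: dict[tuple[str, str], set[str]] = {
    ("analyst", "chat-assistant"): {"summarise", "draft"},
    ("engineer", "code-assistant"): {"review", "draft"},
}

# Stand-in for an append-only audit store (e.g. a SIEM); one JSON line per call.
AUDIT_LOG: list[str] = []


def guarded_call(user: str, role: str, tool: str, task: str, prompt: str,
                 call_model: Callable[[str], str]) -> str:
    """Check the policy, write an audit entry, then call the model only if allowed."""
    allowed = task in POLICY.get((role, tool), set())
    AUDIT_LOG.append(json.dumps({
        "ts": time.time(),
        "user": user,
        "role": role,
        "tool": tool,
        "task": task,
        "allowed": allowed,
        "prompt_chars": len(prompt),  # length only, not the prompt text
    }))
    if not allowed:
        raise PermissionError(f"role {role!r} may not use {tool!r} for {task!r}")
    return call_model(prompt)


# Usage with a dummy function standing in for a real AI tool client.
print(guarded_call("u1", "analyst", "chat-assistant", "summarise", "hi", str.upper))  # HI
```

Logging denied calls as well as allowed ones gives red-teaming and abuse simulation something concrete to review afterwards.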

6) Collaborate: defence improves with shared signals

The strongest defenders collaborate across:

internal teams (SOC, comms, legal, HR)

platform providers (social networks, email providers)

vendors and threat intel communities

ethics and governance experts for policy and training

Collaboration matters because the threat evolves quickly, and attackers reuse infrastructure across targets.

What this means for your organisation

If you’re trying to reduce risk in 2026, focus on layered controls that do not depend on spotting “AI-generated text”. Detection and defence improve fastest when you:

reduce attacker access (identity hardening)

reduce attacker reach (brand surface controls)

reduce time-to-containment (playbooks and monitoring)

reduce human error (training designed around modern tactics)

How Generation Digital can help

Generation Digital supports organisations building practical AI security and governance, without blocking innovation.

We can help you:

assess your exposure (tools, data, identity, surface area)

improve detection with monitoring and reporting

create playbooks for AI-assisted phishing and misinformation

train teams on real-world attack patterns

align AI use to governance and compliance requirements

Summary

Malicious AI use typically combines AI models with websites and social platforms to scale social engineering, misinformation, and cyber operations. The best defence is layered: monitor behaviour and infrastructure, harden identity, protect your brand surface, and build incident response playbooks that include synthetic media and AI-enabled campaigns.

Next steps: If you want to strengthen detection and response for AI-enabled threats, talk to Generation Digital about a security and governance review.

FAQs

Q1: What are the main threats posed by malicious AI?

Malicious AI can scale phishing and fraud, generate misinformation at volume, support reconnaissance, and enable synthetic media (deepfake voice/video) that undermines trust.

Q2: How can organisations detect malicious AI activities?

Focus on behavioural and infrastructure signals (account anomalies, high-velocity posting, lookalike domains, unusual redirects and coordinated network behaviour), then combine them with content analysis where appropriate.

Q3: Why is collaboration important in AI defence?

Because attackers reuse infrastructure and tactics across platforms. Shared intelligence, faster takedowns, and cross-functional response (security, comms, legal) significantly reduce time-to-containment.

Q4: Should we rely on AI-content detectors?

No. Treat them as one signal among many. Behavioural, identity and infrastructure controls are more resilient against evasion.

Q5: How do we prepare for deepfake incidents?

Define a verification protocol (call-back rules, approval steps for payments), train exec teams, and create a comms plan for rapid response and public clarification.

Generation Digital

UK Office

Generation Digital Ltd

33 Queen St,

London

EC4R 1AP

United Kingdom

Canada Office

Generation Digital Americas Inc

181 Bay St., Suite 1800

Toronto, ON, M5J 2T9

Canada

USA Office

Generation Digital Americas Inc

77 Sands St,

Brooklyn, NY 11201,

United States

EU Office

Generation Digital Software

Elgee Building

Dundalk

A91 X2R3

Ireland

Middle East Office

6994 Alsharq 3890,

An Narjis,

Riyadh 13343,

Saudi Arabia

Company No: 256 9431 77 | Copyright 2026 | Terms and Conditions | Privacy Policy